Converting annotations to semantic segmentation mask images¶

Overview:

The DSA database stores annotations in an (x,y) coordinate list format. For many tasks that process annotation data like training machine learning algorithms or measuring interobserver agreement a mask image representation where pixel values encode ground truth information is more useful.

This notebook demonstrates tools to convert annotations into semantic segmentation mask images. There are two approaches for generating these images:

Generate a mask image from a region defined by user-specified coordinates.

Generate mask images from annotations contained within region-of-interest (ROI) annotations. This involves mapping annotations to these ROIs and creating one image per ROI.

The examples below extend approaches described in Amgad et al, 2019:

Mohamed Amgad, Habiba Elfandy, Hagar Hussein, …, Jonathan Beezley, Deepak R Chittajallu, David Manthey, David A Gutman, Lee A D Cooper, Structured crowdsourcing enables convolutional segmentation of histology images, Bioinformatics, , btz083, https://doi.org/10.1093/bioinformatics/btz083

A csv file like the one in histomicstk/annotations_and_masks/tests/test_files/sample_GTcodes.csv is needed to define what group each pixel value corresponds to in the mask image, to define the overlay order of various annotation groups, and to define which groups represent ROIs. Note that the term “group” here comes from the annotation model where each group represents a class like “tumor” or “necrosis” and is associated with an annotation style.

The code demonstrated in this notebook extends functionality of the current API endpoints that get annotations as a list of dictionaries, including handing the following complex situations:

Getting RGB and mask images at the same magnification/resolution

User-defined regions to query, including cropping of annotations to defined region

Retrieving annotations from ROIs, including rotated rectangles and polygons

Overlapping annotations

“Background” class (eg. anything not-otherwise-specified is stroma)

Getting contours (coordinate lists in ROI frame) and bounding boxes relative to images at the same resolution, to be used to trainign object localization models like Faster-RCNN.

There are four run modes:

wsi: get scaled up/down version of mask of whole slide

min_bounding_box: get minimum box for all annotations in slide

manual_bounds: use given xy coordinate bounds provided by the ‘bounds’ param

polygonal_bounds: use manually-drawn polygonal (or rectangular) ROI boundaries

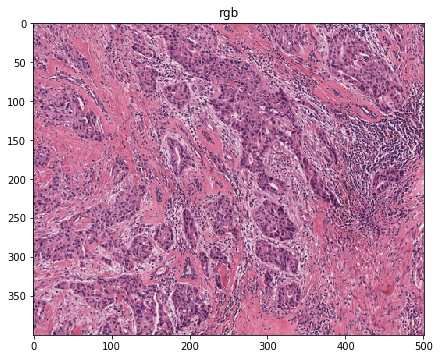

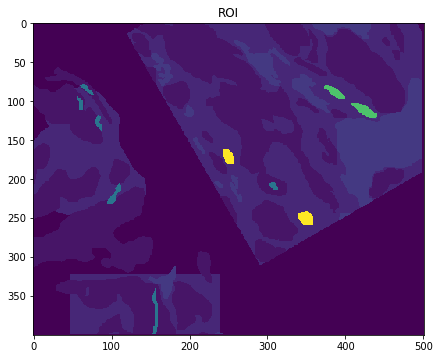

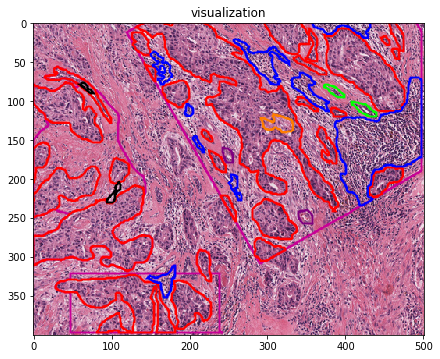

Here is a sample result:

Source annotations

Corresponding masks

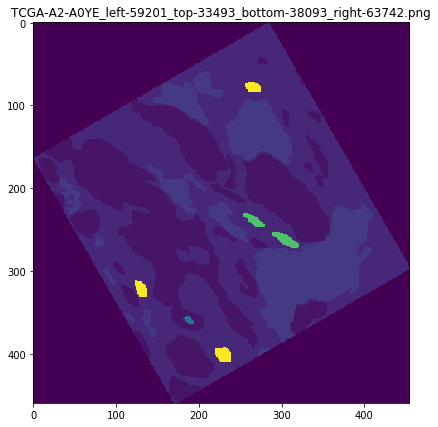

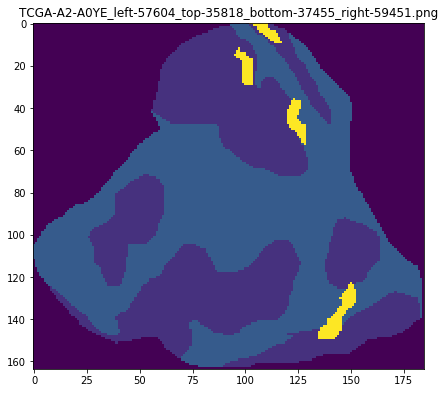

If the polygonal_bounds mode is used though its wrapper function (see jupyter), the result is saved mask files named something like: TCGA-A2-A0YE_left-59201_top-33493_bottom-63742_right-38093.png

Where to look?

|_ histomicstk/

|_annotations_and_masks/

| |_annotation_and_mask_utils.py

| |_annotations_to_masks_handler.py

|_tests/

|_ test_annotation_and_mask_utils.py

|_ test_annotations_to_masks_handler.py

[5]:

import os

import girder_client

from pandas import DataFrame, read_csv

import tempfile

from imageio import imread

import matplotlib.pyplot as plt

%matplotlib inline

from histomicstk.annotations_and_masks.annotation_and_mask_utils import (

get_bboxes_from_slide_annotations,

scale_slide_annotations, get_scale_factor_and_appendStr)

from histomicstk.annotations_and_masks.annotations_to_masks_handler import (

get_image_and_mask_from_slide, get_all_rois_from_slide)

#Some nice default configuration for plots

plt.rcParams['figure.figsize'] = 7, 7

titlesize = 16

Connect girder client and set analysis parameters¶

[2]:

CWD = os.getcwd()

APIURL = 'http://candygram.neurology.emory.edu:8080/api/v1/'

SAMPLE_SLIDE_ID = '5d586d57bd4404c6b1f28640'

GTCODE_PATH = os.path.join(

CWD, '../../tests/test_files/sample_GTcodes.csv')

# connect to girder client

gc = girder_client.GirderClient(apiUrl=APIURL)

# gc.authenticate(interactive=True)

gc.authenticate(apiKey='kri19nTIGOkWH01TbzRqfohaaDWb6kPecRqGmemb')

# just a temp directory to save masks for now

BASE_SAVEPATH = tempfile.mkdtemp()

SAVEPATHS = {

'ROI': os.path.join(BASE_SAVEPATH, 'masks'),

'rgb': os.path.join(BASE_SAVEPATH, 'rgbs'),

'contours': os.path.join(BASE_SAVEPATH, 'contours'),

'visualization': os.path.join(BASE_SAVEPATH, 'vis'),

}

for _, savepath in SAVEPATHS.items():

os.mkdir(savepath)

# What resolution do we want to get the images at?

# Microns-per-pixel / Magnification (either or)

MPP = 2.5 # <- this roughly translates to 4x magnification

MAG = None

Let’s inspect the ground truth codes file¶

This contains the ground truth codes and information dataframe. This is a dataframe that is indexed by the annotation group name and has the following columns:

group: group name of annotation (string), eg. “mostly_tumor”overlay_order: int, how early to place the annotation in the mask. Larger values means this annotation group is overlaid last and overwrites whatever overlaps it.GT_code: int, desired ground truth code (in the semantic segmentation mask) Pixels of this value belong to corresponding group (class)is_roi: Flag for whether this group marks ‘special’ annotations that encode the ROI boundaryis_background_class: Flag, whether this group is the default fill value inside the ROI. For example, you may decide that any pixel inside the ROI is considered stroma.

NOTE:

Zero pixels have special meaning and do NOT encode specific ground truth class. Instead, they simply mean ‘Outside ROI’ and should be IGNORED during model training or evaluation.

[3]:

# read GTCodes file

GTCodes = read_csv(GTCODE_PATH)

GTCodes.index = GTCodes.loc[:, 'group']

[4]:

GTCodes

[4]:

| group | overlay_order | GT_code | is_roi | is_background_class | color | comments | |

|---|---|---|---|---|---|---|---|

| group | |||||||

| roi | roi | 0 | 254 | 1 | 0 | rgb(200,0,150) | NaN |

| evaluation_roi | evaluation_roi | 0 | 253 | 1 | 0 | rgb(255,0,0) | NaN |

| mostly_tumor | mostly_tumor | 1 | 1 | 0 | 0 | rgb(255,0,0) | core class |

| mostly_stroma | mostly_stroma | 2 | 2 | 0 | 1 | rgb(255,125,0) | core class |

| mostly_lymphocytic_infiltrate | mostly_lymphocytic_infiltrate | 1 | 3 | 0 | 0 | rgb(0,0,255) | core class |

| necrosis_or_debris | necrosis_or_debris | 1 | 4 | 0 | 0 | rgb(255,255,0) | core class |

| glandular_secretions | glandular_secretions | 1 | 5 | 0 | 0 | rgb(0,255,0) | secondary class |

| mostly_blood | mostly_blood | 1 | 6 | 0 | 0 | rgb(128,0,128) | secondary class |

| exclude | exclude | 3 | 7 | 0 | 0 | rgb(0,0,0) | secondary class |

| metaplasia_NOS | metaplasia_NOS | 1 | 8 | 0 | 0 | rgb(255,0,0) | secondary class |

| mostly_fat | mostly_fat | 1 | 9 | 0 | 0 | rgb(0,0,0) | secondary class |

| mostly_plasma_cells | mostly_plasma_cells | 1 | 10 | 0 | 0 | rgb(0,0,255) | secondary class |

| other_immune_infiltrate | other_immune_infiltrate | 1 | 11 | 0 | 0 | rgb(0,0,255) | secondary class |

| mostly_mucoid_material | mostly_mucoid_material | 1 | 12 | 0 | 0 | rgb(0,255,0) | secondary class |

| normal_acinus_or_duct | normal_acinus_or_duct | 1 | 13 | 0 | 0 | rgb(0,255,0) | secondary class |

| lymphatics | lymphatics | 1 | 14 | 0 | 0 | rgb(0,255,0) | secondary class |

| undetermined | undetermined | 1 | 15 | 0 | 0 | rgb(0,0,0) | secondary class |

| nerve | nerve | 1 | 16 | 0 | 0 | rgb(0,0,0) | secondary class |

| skin_adnexia | skin_adnexia | 1 | 17 | 0 | 0 | rgb(0,0,0) | secondary class |

| blood_vessel | blood_vessel | 1 | 18 | 0 | 0 | rgb(128,0,128) | secondary class |

| angioinvasion | angioinvasion | 1 | 19 | 0 | 0 | rgb(128,0,128) | secondary class |

| mostly_dcis | mostly_dcis | 1 | 20 | 0 | 0 | rgb(0,0,0) | secondary class |

| other | other | 1 | 21 | 0 | 0 | rgb(0,0,0) | secondary class |

Generate mask image from user-defined coordinates¶

This section generates a mask provided coordinates of a region in a whole-slide image. Depending on the run mode used, these bounds could be determined automatically (eg enclosing all manually drawn annotations) or defined using the function parameters. The method output includes the RGB image of the defined region, the corresponding semantic segmentation mask, xy coordinates of annotations relative to the mask, and a visualization overlay of the annotations on the RGB image that mimics the HistomicsUI visualization of the region.

The function get_image_and_mask_from_slide() implements mask generation.

[2]:

print(get_image_and_mask_from_slide.__doc__)

Parse region from the slide and get its corresponding labeled mask.

This is a wrapper around get_roi_mask() which should be referred to for

implementation details.

Parameters

-----------

gc : object

girder client object to make requests, for example:

gc = girder_client.GirderClient(apiUrl = APIURL)

gc.authenticate(interactive=True)

slide_id : str

girder id for item (slide)

GTCodes_dict : dict

the ground truth codes and information dict.

This is a dict that is indexed by the annotation group name and

each entry is in turn a dict with the following keys:

- group: group name of annotation (string), eg. mostly_tumor

- overlay_order: int, how early to place the annotation in the

mask. Larger values means this annotation group is overlaid

last and overwrites whatever overlaps it.

- GT_code: int, desired ground truth code (in the mask)

Pixels of this value belong to corresponding group (class)

- is_roi: Flag for whether this group encodes an ROI

- is_background_class: Flag, whether this group is the default

fill value inside the ROI. For example, you may decide that

any pixel inside the ROI is considered stroma.

MPP : float or None

Microns-per-pixel -- best use this as it's more well-defined than

magnification which is more scanner/manufacturer specific.

MPP of 0.25 often roughly translates to 40x

MAG : float or None

If you prefer to use whatever magnification is reported in slide.

If neither MPP or MAG is provided, everything is retrieved without

scaling at base (scan) magnification.

mode : str

This specifies which part of the slide to get the mask from. Allowed

modes include the following

- wsi: get scaled up/down version of mask of whole slide

- min_bounding_box: get minimum box for all annotations in slide

- manual_bounds: use given ROI bounds provided by the 'bounds' param

- polygonal_bounds: use the idx_for_roi param to get coordinates

bounds : dict or None

if not None, has keys 'XMIN', 'XMAX', 'YMIN', 'YMAX' for slide

region coordinates (AT BASE MAGNIFICATION) to get labeled image

(mask) for. Use this with the 'manual_bounds' run mode.

idx_for_roi : int

index of ROI within the element_infos dataframe.

Use this with the 'polygonal_bounds' run mode.

slide_annotations : list or None

Give this parameter to avoid re-getting slide annotations. If you do

provide the annotations, though, make sure you have used

scale_slide_annotations() to scale them up/down by sf BEFOREHAND.

element_infos : pandas DataFrame.

The columns annidx and elementidx

encode the dict index of annotation document and element,

respectively, in the original slide_annotations list of dictionaries.

This can be obained by get_bboxes_from_slide_annotations() method.

Make sure you have used scale_slide_annotations().

get_roi_mask_kwargs : dict

extra kwargs for get_roi_mask()

get_contours_kwargs : dict

extra kwargs for get_contours_from_mask()

linewidth : float

visualization line width

get_rgb: bool

get rgb image?

get_contours : bool

get annotation contours? (relative to final mask)

get_visualization : bool

get overlaid annotation bounds over RGB for visualization

tau : int

maximum difference (in pixels) between fetched image and mask allowed.

Above this threshold, an error is raised indicating you may have some

problem in your parameters or elsewhere. If the difference is less then

tau, the rgb image and mask are resized to match each other before

being returned

Returns

--------

dict

Results dict containing one or more of the following keys

bounds: dict of bounds at scan magnification

ROI - (mxn) labeled image (mask)

rgb - (mxnx3 np array) corresponding rgb image

contours - list, each entry is a dict version of a row from the output

of masks_to_annotations_handler.get_contours_from_mask()

visualization - (mxnx3 np array) visualization overlay

More input parameters¶

[6]:

# other params

get_roi_mask_kwargs = {

'iou_thresh': 0.0,

'crop_to_roi': True,

'use_shapely': True,

'verbose': False,

}

get_contours_kwargs = {

'groups_to_get': None,

'roi_group': 'roi',

'get_roi_contour': True,

'discard_nonenclosed_background': True,

'background_group': 'mostly_stroma',

'MIN_SIZE': 10, 'MAX_SIZE': None,

'verbose': False, 'monitorPrefix': '',

}

# params for get_image_and_mask_from_slide()

get_kwargs = {

'gc': gc, 'slide_id': SAMPLE_SLIDE_ID,

'GTCodes_dict': GTCodes.T.to_dict(),

'MPP': MPP,

'MAG': MAG,

'get_roi_mask_kwargs': get_roi_mask_kwargs,

'get_contours_kwargs': get_contours_kwargs,

'get_rgb': True,

'get_contours': True,

'get_visualization': True,

}

1. manual_bounds mode¶

As you’ve seen in documentation, this method has four run modes, which determines where to get semantic segmentation masks and other behaviors. Here we test the basic manual_bounds mode, where you just give the boundaries (at base/scan magnification) of the annotations you want. Of course everything will be scaled to the desired resolution.

[7]:

get_kwargs['bounds'] = {

'XMIN': 58000, 'XMAX': 63000,

'YMIN': 35000, 'YMAX': 39000,

}

[8]:

# get specified region, let the method get and scale annotations

roi_out = get_image_and_mask_from_slide(mode='manual_bounds', **get_kwargs)

/home/mtageld/Desktop/HistomicsTK/histomicstk/annotations_and_masks/annotation_and_mask_utils.py:668: RuntimeWarning: invalid value encountered in greater

iou = iou[:, iou[1, :] > iou_thresh].astype(int)

The result is a a dictionary describing the region location in the slide, a semantic segmentation mask image, an RGB image of the region, contours (lists of annotation coordinates relative to region frame) and a visualization:

[9]:

roi_out.keys()

[9]:

dict_keys(['bounds', 'rgb', 'ROI', 'contours', 'visualization'])

The dictionary roi_out['bounds'] can be used to convert the coordinates in contours from the region frame back to the slide frame

[10]:

roi_out['bounds']

[10]:

{'XMIN': 58000, 'XMAX': 63000, 'YMIN': 35000, 'YMAX': 39000}

[11]:

for imstr in ['rgb', 'ROI', 'visualization']:

plt.imshow(roi_out[imstr])

plt.title(imstr)

plt.show()

[12]:

DataFrame(roi_out['contours']).head()

[12]:

| group | color | ymin | ymax | xmin | xmax | has_holes | touches_edge-top | touches_edge-left | touches_edge-bottom | touches_edge-right | coords_x | coords_y | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | roi | rgb(200,0,150) | 292.0 | 398.0 | 29.0 | 243.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 214,212,212,211,211,210,210,211,211,212,212,21... | 292,294,295,296,299,300,310,311,312,313,314,31... |

| 1 | roi | rgb(200,0,150) | 57.0 | 319.0 | 0.0 | 153.0 | 0.0 | 0.0 | 1.0 | 0.0 | 0.0 | 31,30,29,27,27,28,29,30,31,31,29,22,21,16,15,1... | 57,58,58,60,64,65,64,64,65,66,68,68,69,69,70,7... |

| 2 | roi | rgb(200,0,150) | 0.0 | 309.0 | 120.0 | 498.0 | 0.0 | 1.0 | 0.0 | 0.0 | 0.0 | 143,142,141,140,139,138,137,135,134,133,132,13... | 0,1,1,2,2,3,3,5,5,6,6,7,7,9,9,10,10,11,11,13,1... |

| 3 | mostly_tumor | rgb(255,0,0) | 331.0 | 393.0 | 177.0 | 228.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 185,184,180,180,181,181,182,182,181,181,180,18... | 331,332,332,333,334,336,337,342,343,344,345,34... |

| 4 | mostly_tumor | rgb(255,0,0) | 324.0 | 398.0 | 29.0 | 182.0 | 1.0 | 0.0 | 0.0 | 0.0 | 0.0 | 123,122,120,119,112,111,108,107,94,93,74,73,63... | 324,325,325,326,326,327,327,328,328,329,329,33... |

Note that if we were to use the above function call repeatedly for the same slide (eg to get tiles or multiple regions of interest), this would repeatedly use a get request to obtain the annotations from the server, which is inefficient. Instead, if we know we’ll be using this repeatedly for the same slide, we manually get annotations and scale them down/up to desired resolution, and pass them to get_image_and_mask_from_slide() method. Here’s how this could be done.

[13]:

# get annotations for slide

slide_annotations = gc.get('/annotation/item/' + SAMPLE_SLIDE_ID)

# scale up/down annotations by a factor

sf, _ = get_scale_factor_and_appendStr(

gc=gc, slide_id=SAMPLE_SLIDE_ID, MPP=MPP, MAG=MAG)

slide_annotations = scale_slide_annotations(slide_annotations, sf=sf)

# get bounding box information for all annotations

element_infos = get_bboxes_from_slide_annotations(slide_annotations)

[14]:

# get specified region -- manually providing scaled annotations

roi_out = get_image_and_mask_from_slide(

mode='manual_bounds', slide_annotations=slide_annotations,

element_infos=element_infos, **get_kwargs)

/home/mtageld/Desktop/HistomicsTK/histomicstk/annotations_and_masks/annotation_and_mask_utils.py:668: RuntimeWarning: invalid value encountered in greater

iou = iou[:, iou[1, :] > iou_thresh].astype(int)

[15]:

roi_out['bounds']

[15]:

{'XMIN': 58000, 'XMAX': 63000, 'YMIN': 35000, 'YMAX': 39000}

2. min_bounding_box mode¶

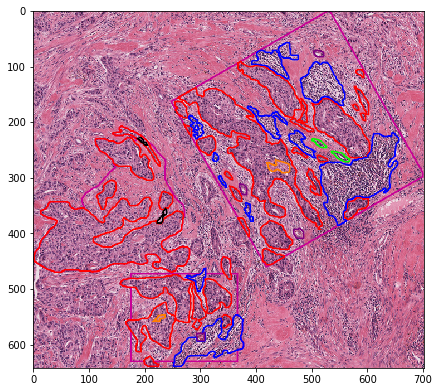

If you don’t care about any “special” ROI annotations and would just like to parse everything in the slide into a ‘super’ minimum-bounding-box semantic segmentation mask, use the min_bounding_box mode as follows.

[16]:

# get ROI bounding everything

minbbox_out = get_image_and_mask_from_slide(

mode='min_bounding_box', slide_annotations=slide_annotations,

element_infos=element_infos, **get_kwargs)

[17]:

minbbox_out['bounds']

[17]:

{'XMIN': 56736, 'YMIN': 33493, 'XMAX': 63742, 'YMAX': 39900}

[18]:

plt.imshow(minbbox_out['visualization'])

[18]:

<matplotlib.image.AxesImage at 0x7fece09599e8>

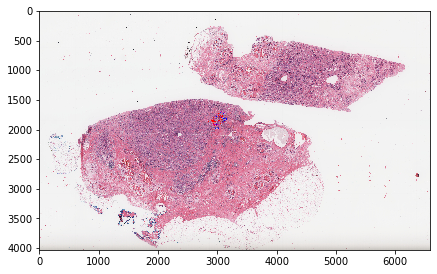

3. wsi mode¶

If you just want a miniature version of the slide and all its annotations, usine this mode.

[19]:

# get entire wsi region

get_kwargs['MPP'] = 5.0 # otherwise it's too large!

wsi_out = get_image_and_mask_from_slide(

mode='wsi', **get_kwargs)

[20]:

wsi_out['bounds']

[20]:

{'XMIN': 0, 'XMAX': 131536, 'YMIN': 0, 'YMAX': 80453}

[21]:

plt.imshow(wsi_out['visualization'])

plt.show()

[22]:

plt.imshow(wsi_out['visualization'][1650:2000, 2800:3300])

plt.show()

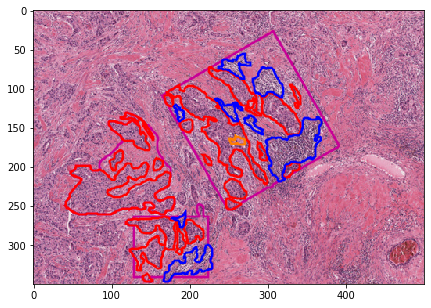

Generate masks for ROIs¶

This method generates masks for regions defined by ROI annotations (defined groups in the ground truth codes). Annotated structures are mapped to these ROIs, and then each ROI is converted into a single semantic segmentation mask image.

This is the core method you’ll want to use¶

[6]:

print(get_all_rois_from_slide.__doc__)

Parse annotations and saves ground truth masks for ALL ROIs.

Get all ROIs in a single slide. This is mainly uses

get_image_and_mask_from_slide(), which should be referred to

for implementation details.

Parameters

-----------

gc : object

girder client object to make requests, for example:

gc = girder_client.GirderClient(apiUrl = APIURL)

gc.authenticate(interactive=True)

slide_id : str

girder id for item (slide)

GTCodes_dict : dict

the ground truth codes and information dict.

This is a dict that is indexed by the annotation group name and

each entry is in turn a dict with the following keys:

- group: group name of annotation (string), eg. mostly_tumor

- overlay_order: int, how early to place the annotation in the

mask. Larger values means this annotation group is overlaid

last and overwrites whatever overlaps it.

- GT_code: int, desired ground truth code (in the mask)

Pixels of this value belong to corresponding group (class)

- is_roi: Flag for whether this group encodes an ROI

- is_background_class: Flag, whether this group is the default

fill value inside the ROI. For example, you may decide that

any pixel inside the ROI is considered stroma.

save_directories : dict

paths to directories to save data. Each entry is a string, and the

following keys are allowed

- ROI: path to save masks (labeled images)

- rgb: path to save rgb images

- contours: path to save annotation contours

- visualization: path to save rgb visualization overlays

get_image_and_mask_from_slide_kwargs : dict

kwargs to pass to get_image_and_mask_from_slide()

default values are assigned if speceific parameters are not given.

max_roiside : int or None

If int, this is the maximum allowed side for a downloaded region. If

a region-of-interest is larger than this size, then it is tiled into

non-overlapping regions who maximal side is max_roiside and downloaded.

If None, the ROI is downloaded as-is, even if it was extremely large.

If you know your slides have very large ROI annotations, the safer

option is to set a max_roiside. A good value may be 5000-8000 pixels.

slide_name : str or None

If not given, it's inferred using a server request using girder client.

verbose : bool

Print progress to screen?

monitorPrefix : str

text to prepend to printed statements

Returns

--------

list of dicts

each entry contains the following keys

- ROI: path to saved mask (labeled image)

- rgb: path to saved rgb image

- contours: path to saved annotation contours

- visualization: path to saved rgb visualization overlay

[24]:

detailed_kwargs = {

'MPP': MPP,

'MAG': None,

'get_roi_mask_kwargs': get_roi_mask_kwargs,

'get_contours_kwargs': get_contours_kwargs,

'get_rgb': True,

'get_contours': True,

'get_visualization': True,

}

The parameter get_roi_mask_kwargs (which is one of the keys of the parameter get_image_and_mask_from_slide_kwargs) is a dictionary of kwargs that is eventually passed on to get_roi_mask(). Watch out for the following parameters:

crop_to_roi- flag of whether to crop polygons to roi (prevent ‘overflow’ beyond roi edge)use_shapely- flag of whether to precisely determine whether an element belongs to an ROI using shapely polygons. Ever-so-slightly slower. If set to False, overlapping bounding box is used as a cheap but less precise indicator of inclusion.

Also note that, as the docstring explains, you can set max_roiside to some integer value so that large ROIs would be tiled to prevent memory errors.

[25]:

savenames = get_all_rois_from_slide(

gc=gc, slide_id=SAMPLE_SLIDE_ID, GTCodes_dict=GTCodes.T.to_dict(),

save_directories=SAVEPATHS,

get_image_and_mask_from_slide_kwargs=detailed_kwargs,

max_roiside=None,

slide_name='TCGA-A2-A0YE', verbose=False)

[26]:

savenames[0]

[26]:

{'ROI': '/tmp/tmpf7ch4pl6/masks/TCGA-A2-A0YE_left-59201_top-33493_bottom-38093_right-63742.png',

'rgb': '/tmp/tmpf7ch4pl6/rgbs/TCGA-A2-A0YE_left-59201_top-33493_bottom-38093_right-63742.png',

'visualization': '/tmp/tmpf7ch4pl6/vis/TCGA-A2-A0YE_left-59201_top-33493_bottom-38093_right-63742.png',

'contours': '/tmp/tmpf7ch4pl6/contours/TCGA-A2-A0YE_left-59201_top-33493_bottom-38093_right-63742.csv'}

Let’s visualize the ROIs created¶

[27]:

for savename in savenames:

vis = imread(savename['ROI'])

plt.imshow(vis)

plt.title(os.path.basename(savename['ROI']))

plt.show()